“Can you take a look at the service for me?”

If you’ve ever heard that in the middle of a sprint, incident, or code review… you know how frustrating that sentence can be.

Check what? Based on what? Just because the API returned 200 and the front-end buttons rendered, does that really mean everything is healthy?

We need to stop guessing. And start defining what a healthy system actually means.

That’s where three famous (and often ignored) concepts come in:

SLI, SLO and SLA

What are SLI, SLO and SLA? (With real examples)

SLI – Service Level Indicator

It’s the raw metric you’re observing. Examples:

| SLI Name | What it measures | Practical example |
|---|---|---|
| Error rate | % of HTTP requests returning errors | Requests to /checkout with 5xx status |
| Availability | % of time an endpoint is accessible | Availability of /api/auth/login |
| Latency | Response time of requests | Time to receive response from /payment/process |
| Throughput | Volume of processed requests | Number of requests/sec on /orders/list |
| Saturation | Usage of critical system resources | Kafka order queue above 80% occupancy |
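
To make "raw metric" concrete, here is a minimal sketch of how two of these SLIs (error rate and latency) could be computed from request records. The `Request` shape, the sample data, and the nearest-rank percentile method are assumptions for illustration; in practice your APM or metrics backend usually does this aggregation for you.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    """One observed HTTP request (hypothetical record shape)."""
    path: str
    status: int
    latency_ms: float


def error_rate(requests: list[Request]) -> float:
    """Percentage of requests that returned a 5xx status."""
    if not requests:
        return 0.0
    errors = sum(1 for r in requests if 500 <= r.status <= 599)
    return 100.0 * errors / len(requests)


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Illustrative sample: 20 requests to /checkout, one of them a slow failure.
sample = [Request("/checkout", 200, 100.0 + i) for i in range(19)]
sample.append(Request("/checkout", 503, 900.0))

checkout_error_rate = error_rate(sample)
checkout_p95 = percentile([r.latency_ms for r in sample], 95)
print(f"error rate: {checkout_error_rate:.1f}%  p95 latency: {checkout_p95:.0f} ms")
```

Note that the single 900 ms failure does not move the p95 here, which is one reason error rate and latency are usually tracked as separate SLIs.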

SLO – Service Level Objective

It’s the internal technical agreement about the acceptable value for that SLI: the threshold it must stay within over a defined time window. Example:

| SLI | Suggested SLO |
|---|---|
| Error rate of /checkout | Less than 0.1% 5xx errors over 30 days |
| Availability of /auth/login | 99.95% monthly uptime |
| Latency on /payment/process | 95% of responses under 300ms |
| Throughput on /orders/list | At least 100 sustained RPS without errors |
| Kafka payment queue saturation | Queue must never exceed 80% for more than 5 consecutive minutes |
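
One common way to read an availability SLO is as an error budget: the amount of downtime the target still tolerates. The sketch below turns the 99.95% monthly uptime target into minutes, assuming a 30-day window. The function names and the 10 minutes of recorded downtime are made up for illustration.

```python
def error_budget_minutes(slo_percent: float, window_days: int = 30) -> float:
    """Minutes of downtime the SLO tolerates over the window."""
    if not 0 < slo_percent < 100:
        raise ValueError("slo_percent must be between 0 and 100 (exclusive)")
    window_minutes = window_days * 24 * 60
    return window_minutes * (100.0 - slo_percent) / 100.0


def budget_remaining(slo_percent: float, downtime_minutes: float, window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative means the SLO is breached)."""
    budget = error_budget_minutes(slo_percent, window_days)
    return (budget - downtime_minutes) / budget


# 99.95% monthly uptime on /auth/login, with 10 minutes of downtime so far (illustrative).
budget = error_budget_minutes(99.95)
remaining = budget_remaining(99.95, downtime_minutes=10.0)
print(f"budget: {budget:.1f} min, remaining: {remaining:.0%}")
```

With these numbers the budget is about 21.6 minutes per 30 days, so a single 10-minute outage already spends close to half of it.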

SLA – Service Level Agreement

It’s the formal agreement with a client or business team. Example:

“If monthly availability of /auth/login drops below 99.5%, there will be a contractual penalty of X dollars.”

We won’t go deep into SLAs here, because the focus is helping you build real visibility and strong technical agreements within your team using SLI + SLO.

A Practical Example (With Template)

Imagine you have a payment API running in containers on Kubernetes. Below is a table with real SLI/SLO examples you can use as a base to implement observability + alerts.

| Metric (SLI) | Objective (SLO) | Where to measure | Alert Type | Priority |
|---|---|---|---|---|
| HTTP 5xx error rate on /payment/checkout | < 0.1% over last 7 days | API Gateway / APM (Datadog, Prometheus, etc.) | Alert if > 0.1% for 15 min | High |
| Latency on /payment/process | 95% < 300ms (p95) | Distributed tracing + Logs | Alert if p95 > 300ms for 5 min | High |
| Availability of payment-api container | 99.95% monthly | Kubernetes healthcheck + Prometheus | Alert if container crashing/restarting | High |
| CPU usage of container | < 70% sustained | Prometheus / Grafana | Alert if > 70% for 10 min | Medium |
| Memory usage of container | < 75% sustained | Prometheus / Grafana | Alert if > 75% for 10 min | Medium |
| Kafka payment queue usage | < 80% buffer | Kafka Exporter + Prometheus | Alert if > 80% for 10 min | High |
| Kafka backlog | < 1,000 delayed messages | Kafka metrics | Alert if backlog grows for 10 min | High |
| Database availability | > 99.9% weekly | DB Proxy or APM monitoring | Alert if unavailable for > 1 min | High |
| Auth request error rate (/auth) | < 0.2% over 7 days | API Gateway or Auth Service | Alert if spike > 0.2% for 15 min | High |
| Total service throughput | Sustain > 100 RPS stably | APM + Load Balancer metrics | Alert if abrupt drop in throughput | High |
| Internal job queue time (e.g., invoice generation) | < 1s average | Job runner metrics or Prometheus | Alert if average > 1s for 5 min | Medium |
| p99 latency on /refunds | < 500ms | APM or tracing | Alert if p99 > 500ms for 10 min | Medium |
| External call errors (e.g., payment gateway) | < 0.5% | Circuit breaker + logs | Alert if spike or constant timeout | High |
| Automatic retry rate | < 2% of requests | Retry middleware | Alert if > 2% for 15 min | Low |
| Event deserialization/parse errors | 0 invalid events | Kafka consumer + logs | Alert if invalid event received | High |
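
Most rows in the Alert Type column follow the same pattern: "alert if the value stays above X for N minutes". Here is a minimal sketch of that logic, assuming one sample per minute, ordered oldest first. `AlertRule` and `should_alert` are hypothetical names, not part of any monitoring tool. Prometheus alerting rules express a similar idea with their `for` clause.

```python
from dataclasses import dataclass


@dataclass
class AlertRule:
    """Hypothetical rule shape mirroring the 'Alert if > X for N min' column."""
    name: str
    threshold: float
    for_minutes: int
    priority: str


def should_alert(rule: AlertRule, samples: list[float]) -> bool:
    """True when the most recent `for_minutes` samples all exceed the threshold.

    Assumes one sample per minute, oldest first, and for_minutes >= 1.
    """
    if rule.for_minutes < 1 or len(samples) < rule.for_minutes:
        return False
    recent = samples[-rule.for_minutes:]
    return all(value > rule.threshold for value in recent)


cpu_rule = AlertRule("CPU usage of container", threshold=70.0, for_minutes=10, priority="Medium")

brief_spike = [50.0] * 8 + [95.0, 96.0]   # only 2 minutes above 70%
sustained = [50.0] * 2 + [82.0] * 10      # 10 consecutive minutes above 70%

print(should_alert(cpu_rule, brief_spike))  # False
print(should_alert(cpu_rule, sustained))    # True
```

Requiring the breach to be sustained keeps short spikes from paging anyone, which is the point of the "for N min" part of each rule.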

Now it’s on you:

The table above is just an example. Every system has its own characteristics, critical points, and specific needs.

What you can (and should) do now:

- Pick one microservice from your system

- List its main endpoints and responsibilities

- Define 5 to 10 real SLIs that represent what “health” means there

- For each SLI, define an SLO

- Configure monitoring and alerts based on those SLOs

- Share it with the team (it’s useless if only you know it)
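
If it helps to get started, the steps above can be sketched as a small SLO catalog kept in code and rendered as a table to share with the team. Everything here (the `Slo` fields, `review_notes`, the `payment-api` name used as the service) is a hypothetical shape for illustration; the two entries are copied from the example table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slo:
    """One SLI with its objective (hypothetical catalog entry)."""
    sli: str
    objective: str
    where_to_measure: str
    priority: str


def review_notes(slos: list[Slo]) -> list[str]:
    """Flag catalogs outside the suggested 5 to 10 SLIs per service."""
    notes = []
    if not 5 <= len(slos) <= 10:
        notes.append(f"{len(slos)} SLIs defined; the suggested range is 5 to 10")
    return notes


def to_markdown(service: str, slos: list[Slo]) -> str:
    """Render the catalog as a table that can be pasted into a wiki or README."""
    lines = [
        f"SLOs for {service}",
        "",
        "| Metric (SLI) | Objective (SLO) | Where to measure | Priority |",
        "|---|---|---|---|",
    ]
    for s in slos:
        lines.append(f"| {s.sli} | {s.objective} | {s.where_to_measure} | {s.priority} |")
    return "\n".join(lines)


catalog = [
    Slo("HTTP 5xx error rate on /payment/checkout", "< 0.1% over last 7 days", "API Gateway / APM", "High"),
    Slo("Latency on /payment/process", "95% < 300ms (p95)", "Distributed tracing + Logs", "High"),
]

doc = to_markdown("payment-api", catalog)
print(doc)
for note in review_notes(catalog):
    print("note:", note)
```

Keeping the catalog next to the service code makes it reviewable in pull requests, so changes to an SLO get discussed instead of silently edited.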

To wrap up: what if this became culture?

Now imagine this…

You have all your SLIs clearly defined, your SLOs visible on a dashboard, alerts configured with clear criteria — and the whole team knowing exactly what a healthy system looks like.

It would be much easier to make decisions, right?

- Knowing when it’s time to act (and when it’s not)

- Having clarity about system health without relying on assumptions

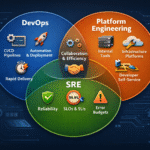

- Avoiding repetitive and meaningless work (the famous toil work from SRE principles)

- Focusing on what really matters: delivering value with stability

An SLO is not meant to become a forgotten spreadsheet. It’s meant to be used every single day as the compass for reliability.

Want to learn MORE?

Honestly? I don’t think you should be doing all of this manually anymore.

I’m about to launch a new training called “SRE Efficient: How AI Transforms Reliability Engineering”, and in one of the classes I show exactly how you can use LLMs to help you define SLIs, SLOs — and even generate incident summaries in minutes.

Yes. Minutes.

If you want to see how AI can amplify your reliability practice instead of just generating alerts and dashboards, stay tuned.

More details coming very soon. 🚀