What are the “Four Golden Signals”? Quality Monitoring

You’re drowning in metrics. Your monitoring dashboard has 47 panels. You’re getting paged for CPU spikes at 3 AM. And when something actually breaks, you still don’t know where to start looking.

Sound familiar?

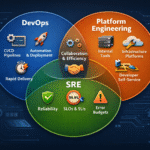

This is exactly the problem the “Four Golden Signals” solve. These four metrics—Latency, Traffic, Errors, and Saturation—form the foundation of effective SRE monitoring. They’re not fancy. They’re not new. But they work because they answer the question every operator asks: “What’s actually broken?”

The Four Golden Signals: Your Monitoring North Star

The Four Golden Signals come from Google’s Site Reliability Engineering book, which recommends them as the four metrics to focus on if you can only measure four things about a user-facing system, regardless of its complexity. They’re not about perfecting your observability stack; they’re about starting with what matters.

Think of them as the four vital signs of your infrastructure.

Signal 1: Latency — How Long Are Things Taking?

Latency is the time your system takes to respond to a request. It’s the most visible metric from a user’s perspective: How long does it take to load a page? How long to execute a database query? How long for your API to respond?

Latency is tricky because it’s not uniform. You have:

- Internal latency: Function calls, database queries, cache lookups

- External latency: Network calls to third-party services, downstream APIs

- Request path latency: The end-to-end time from request arrival to response delivery

From an SRE perspective, latency connects directly to SLOs. If your SLO says “p99 latency < 200ms,” you need to monitor that p99 continuously. If your p99 consistently sits at 250ms, you’re burning through your error budget fast, and users notice.

How to start: Pick your critical user journeys (login, search, checkout). Measure their latency at the p50, p95, and p99. Tools like AWS X-Ray, Datadog APM, or New Relic give you this granularity without guessing.
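To make the percentile idea concrete, here is a minimal Python sketch that computes p50, p95, and p99 from raw latency samples using the nearest-rank method. The function names and sample values are hypothetical, and APM tools typically compute percentiles from histograms or streaming sketches rather than by sorting every sample.

```python
import math

def percentile(samples_ms, p):
    """Return the p-th percentile (0 < p <= 100) using the nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_ms)
    # Nearest rank: smallest value with at least p% of samples at or below it.
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def latency_summary(samples_ms):
    """p50/p95/p99 for one user journey."""
    return {f"p{p}": percentile(samples_ms, p) for p in (50, 95, 99)}

# Hypothetical checkout latencies in milliseconds.
samples = [120, 135, 140, 150, 160, 170, 180, 210, 450, 900]
print(latency_summary(samples))  # → {'p50': 160, 'p95': 900, 'p99': 900}
```

The median looks healthy while the tail does not. With only ten samples, p95 and p99 both land on the single 900ms outlier, so real percentiles need many more samples to be meaningful.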

Signal 2: Traffic — How Much Load Are You Handling?

Traffic measures the volume flowing through your system: How many requests per second? How many database inserts? How many messages in your queue?

Traffic is your early warning system. A sudden spike in traffic can explain a cascade of failures:

- High latency? Check traffic. Maybe you’re getting 10x normal load.

- Database struggling? Check traffic. Maybe an agent started generating garbage requests.

- Memory usage climbing? Check traffic. Maybe someone misconfigured a retry loop.

From an SRE perspective, traffic patterns tell you whether you’re within capacity. If your system can handle 1,000 req/s but you’re now seeing 2,000 req/s, saturation (your fourth signal) will follow. Understanding traffic lets you scale before you break.

How to start: Instrument key flows (API requests, database transactions, message publishing). Use rate-based metrics from Prometheus, CloudWatch, or Datadog. Alert on anomalies: “Request rate jumped 50% in 5 minutes.”
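The “jumped 50% in 5 minutes” alert can be sketched in two steps: turn a monotonically increasing counter such as `requests_total` into a per-second rate for each 5-minute window, then compare the latest window with the one before it. This is an illustration under those assumptions, not how Prometheus or CloudWatch evaluate rules internally; the helper names and counter samples are hypothetical.

```python
def per_second_rate(earlier, later):
    """Average per-second rate between two (timestamp_s, counter_value) samples.

    Assumes a monotonically increasing counter. If the value goes down, the
    counter is treated as restarted from zero (loosely like Prometheus rate()).
    """
    (t0, v0), (t1, v1) = earlier, later
    if t1 <= t0:
        raise ValueError("samples must be in time order")
    delta = v1 - v0 if v1 >= v0 else v1  # counter reset: count from zero
    return delta / (t1 - t0)

def rate_jumped(baseline_rate, current_rate, threshold=0.5):
    """True if the current rate exceeds the baseline by more than threshold (0.5 = 50%)."""
    if baseline_rate <= 0:
        return current_rate > 0  # any traffic after none counts as a jump
    return (current_rate - baseline_rate) / baseline_rate > threshold

# Hypothetical requests_total samples taken 5 minutes apart.
s0, s1, s2 = (0, 0), (300, 300_000), (600, 900_000)
baseline = per_second_rate(s0, s1)  # 1000.0 req/s in the previous window
current = per_second_rate(s1, s2)   # 2000.0 req/s in the latest window
print(rate_jumped(baseline, current))  # → True
```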

Signal 3: Errors — How Many Requests Are Failing?

Errors are requests that didn’t succeed. They come in several forms:

- HTTP 4xx (client error) and 5xx (server error) responses

- Failed database transactions

- Exceptions in logs

- Timeouts on downstream services

Errors are the most direct signal that something is broken. But here’s the catch: not all errors are visible in HTTP status codes. You need to monitor:

- API error rates (4xx and 5xx)

- Application exception rates (check your logs)

- Failed transactions in your database

- Timeout rates on external service calls

From an SRE perspective, error rates feed directly into your SLI (Service Level Indicator). If your SLO is 99.9% availability and only 99.5% of your requests are currently succeeding, you’re burning error budget five times faster than the SLO allows (a 0.5% failure rate against a 0.1% budget). Catching this early means you can act before breaching the SLO.
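The “five times faster” figure is the burn rate, the term Google’s SRE Workbook uses for how quickly you are spending error budget relative to what the SLO allows. A minimal sketch, assuming you can count good and total requests over some recent window (the function name is hypothetical):

```python
def burn_rate(slo_target, good, total):
    """Error-budget burn rate over a window (hypothetical helper).

    1.0 spends exactly the whole budget over the SLO period;
    above 1.0 exhausts it early.
    """
    if total == 0:
        return 0.0  # no traffic, nothing burned
    error_budget = 1 - slo_target       # 0.001 for a 99.9% SLO
    observed_errors = 1 - good / total  # fraction of failed requests
    return observed_errors / error_budget

# 99.5% of 100,000 requests succeeded against a 99.9% SLO.
print(round(burn_rate(0.999, good=99_500, total=100_000), 2))  # → 5.0
```

Assuming a 30-day SLO window, a sustained burn rate of 5 would exhaust the entire budget in about six days.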

How to start: Query your logs for error patterns. Set up alerts: “Error rate > 1% for 5 minutes” or “5 errors in 1 minute on the checkout endpoint.” Use structured logging to make errors searchable.
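Both example alerts can be sketched with a sliding time window of request outcomes. The `ErrorWindow` helper below is hypothetical, assumes events arrive in timestamp order, and simplifies “for 5 minutes” to “measured over the last 5 minutes”; Prometheus alerting rules, for instance, additionally use a `for` duration that requires the condition to hold continuously before the alert fires.

```python
from collections import deque

class ErrorWindow:
    """Sliding time window of request outcomes (hypothetical helper)."""

    def __init__(self, window_s):
        self.window_s = window_s
        self.events = deque()  # (timestamp_s, is_error), oldest first

    def _evict(self, now):
        # Drop events that fall outside the window ending at `now`.
        while self.events and self.events[0][0] <= now - self.window_s:
            self.events.popleft()

    def record(self, ts, is_error):
        self.events.append((ts, is_error))
        self._evict(ts)

    def error_count(self, now):
        self._evict(now)
        return sum(1 for _, err in self.events if err)

    def error_rate(self, now):
        self._evict(now)
        return self.error_count(now) / len(self.events) if self.events else 0.0

# "Error rate > 1%" measured over the last 5 minutes of all traffic.
overall = ErrorWindow(window_s=300)
for t in range(200):
    overall.record(t, is_error=(t % 50 == 0))  # 4 errors in 200 requests
print(overall.error_rate(now=199) > 0.01)  # → True (2% error rate)

# "5 errors in 1 minute on the checkout endpoint."
checkout_errors = ErrorWindow(window_s=60)
for t in (10, 20, 30, 40, 50):
    checkout_errors.record(t, is_error=True)
print(checkout_errors.error_count(now=50) >= 5)  # → True
```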

Signal 4: Saturation — How Full Is Your System?

Saturation measures how much of your resources you’re using: CPU, memory, disk I/O, database connections, queue depth.

Saturation is the sneakiest signal because it’s a leading indicator of problems. High saturation doesn’t mean you’re broken yet; it means you’re about to be. When CPU hits 90%, latency climbs. When a host starts swapping memory to disk, everything slows. When your connection pool maxes out, requests queue.

From an SRE perspective, saturation is tied to resilience. A system at 100% saturation has zero headroom to handle traffic spikes, failovers, or unexpected load. It also gives up static stability, the practice of provisioning enough spare capacity in advance that the system keeps working through a failure without first having to scale up. Under load, your system should degrade gracefully, not catastrophically.

How to start: Monitor the basics on every instance: CPU, memory, disk usage. Monitor application-level saturation: database connection pools, queue lengths, thread pools. Alert at 70-80% saturation, not 95%.
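A small sketch of the 70-80% guideline: compute utilization as used divided by capacity for each resource and report anything at or above a warning threshold. The 75% default, the resource names, and the pool, thread, and queue numbers are assumptions for illustration; only the disk figures come from the real host, via the standard library’s `shutil.disk_usage`.

```python
import shutil

WARN_AT = 0.75  # assumption: a point inside the 70-80% band

def saturation_report(resources, warn_at=WARN_AT):
    """Return {name: utilization} for each resource at or above warn_at.

    `resources` maps a name to (used, capacity), e.g. checked-out DB
    connections versus pool size, or queue depth versus queue limit.
    """
    hot = {}
    for name, (used, capacity) in resources.items():
        utilization = used / capacity
        if utilization >= warn_at:
            hot[name] = round(utilization, 3)
    return hot

disk = shutil.disk_usage("/")  # named tuple (total, used, free) in bytes
resources = {
    "disk_root": (disk.used, disk.total),
    "db_connection_pool": (42, 50),  # hypothetical: 42 of 50 connections in use
    "worker_threads": (12, 32),      # hypothetical
    "job_queue": (780, 1000),        # hypothetical queue depth vs. limit
}
print(saturation_report(resources))
```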

Putting It Together: Troubleshooting With the Four Signals

Here’s how they work together. A user complains: “The site is slow!”

- Check Latency: p99 latency is 800ms (normal is 200ms). ✓ Confirmed slow.

- Check Traffic: Requests per second are 2x normal. ✓ We’re under load.

- Check Errors: Error rate is 0.1% (normal). ✓ No widespread failures.

- Check Saturation: Database CPU is 85%, memory is 90%. ✗ The database is saturated.

Root cause: Database can’t keep up with query volume. Action: Scale the database, optimize slow queries, or add caching.
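The walk-through above can be expressed as a small triage function that compares current readings to a baseline for each signal in turn. Everything here is a hypothetical sketch: the names, the thresholds (more than 1.5x baseline counts as abnormal, 80% utilization counts as saturated), and the 500 req/s baseline, which is an assumed value since the example only says traffic doubled.

```python
from dataclasses import dataclass, field

@dataclass
class Signals:
    p99_latency_ms: float
    requests_per_s: float
    error_rate: float  # fraction: 0.001 means 0.1%
    saturation: dict = field(default_factory=dict)  # resource -> utilization (0.0-1.0)

def triage(current, baseline, ratio_limit=1.5, saturation_limit=0.8):
    """Check each golden signal against a baseline (thresholds are assumptions)."""
    return {
        "latency": current.p99_latency_ms > ratio_limit * baseline.p99_latency_ms,
        "traffic": current.requests_per_s > ratio_limit * baseline.requests_per_s,
        "errors": current.error_rate > ratio_limit * baseline.error_rate,
        "saturation": sorted(
            name for name, used in current.saturation.items() if used >= saturation_limit
        ),
    }

baseline = Signals(p99_latency_ms=200, requests_per_s=500, error_rate=0.001)
current = Signals(
    p99_latency_ms=800,
    requests_per_s=1000,  # 2x normal
    error_rate=0.001,     # unchanged
    saturation={"db_cpu": 0.85, "db_memory": 0.90, "app_cpu": 0.40},
)
print(triage(current, baseline))
# → {'latency': True, 'traffic': True, 'errors': False, 'saturation': ['db_cpu', 'db_memory']}
```

Abnormal latency and traffic with normal errors and a saturated database matches the root cause reached by hand.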

Without these four signals, you’re guessing. With them, you have a repeatable troubleshooting process.

Why These Four? Why Not More?

You might think: “But what about p50 latency? Memory leaks? Network drops?”

The Four Golden Signals work because they’re universal. Every system has latency, traffic, errors, and saturation. They’re simple enough that operators can remember them during incidents. And critically—they’re correlated. Most problems show up in multiple signals at once.

This aligns with SRE’s principle of simplicity: you don’t need a perfect observability stack to be effective. You need the right metrics, measured well, with clear thresholds.

Getting Started: A Minimal Implementation

You don’t need enterprise monitoring tools. Start here:

- Latency: Add timing logs (`console.time()`, `start = time.now()`) to critical paths

- Traffic: Count requests with a simple counter (`requests_total` in Prometheus)

- Errors: Parse logs for errors and count them (`errors_total`)

- Saturation: Collect host metrics (CPU, memory) from your OS
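Here is one way the first three items might look in Python using only the standard library: a decorator that times each call, increments a request counter, and counts exceptions as errors. The `instrumented` decorator and `checkout` handler are hypothetical, the counters are in-memory stand-ins for what you would export to Prometheus, and `time.perf_counter()` plays the role of `start = time.now()`. Saturation is left to OS-level host metrics, as the list suggests.

```python
import functools
import time
from collections import Counter

# In-memory stand-ins for exported metrics (assumption: no metrics library).
requests_total = Counter()
errors_total = Counter()
latency_ms = {}  # endpoint -> list of call durations in milliseconds

def instrumented(endpoint):
    """Record traffic, errors, and latency for every call to one endpoint."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            requests_total[endpoint] += 1
            start = time.perf_counter()  # monotonic clock suited to durations
            try:
                return fn(*args, **kwargs)
            except Exception:
                errors_total[endpoint] += 1
                raise  # count the error, but let the caller still see it
            finally:
                elapsed = (time.perf_counter() - start) * 1000
                latency_ms.setdefault(endpoint, []).append(elapsed)
        return wrapper
    return decorator

@instrumented("checkout")
def checkout(cart_total):  # hypothetical request handler
    if cart_total < 0:
        raise ValueError("negative total")
    return "ok"

checkout(10)
checkout(20)
try:
    checkout(-1)
except ValueError:
    pass  # the failure is still counted in errors_total
print(requests_total["checkout"], errors_total["checkout"])  # → 3 1
```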

Set alerts on each signal at sensible thresholds for your system. When an alert fires, check all four before acting.

Conclusion

The Four Golden Signals aren’t revolutionary. They’re not the latest monitoring trend. They’re durable because they answer the fundamental question of SRE: “Is my system healthy, and if not, where do I start looking?”

From an SRE perspective, these signals connect to everything:

- Latency feeds into SLOs and user satisfaction

- Traffic determines whether you’re within capacity

- Errors show direct impact on reliability

- Saturation reveals resilience and headroom for failure

Start measuring these four. Build alerts on them. Practice troubleshooting with them. You’ll likely find that most of your incidents become far easier to diagnose with just these four metrics.

Stay tuned for deeper dives into building monitoring that actually helps you sleep at night.

Cheers,

Douglas Mugnos

MUGNOS-IT 🚀